This is a concise yet insightful analysis of the future of AI that Martin Waxman shared on LinkedIn. It reflects my views of and experience with AI. This comment is spot on: users are not sparring with AI, engaging in provocative discussions, asking it to challenge their assumptions and ideas. They accept what it gives them and move on, choosing the easy way out.

Martin is an adjunct professor at the York University Schulich School of Business, and associate director of the School’s Future of Marketing Institute, a global think tank that examines and analyses tech trends and their implications for marketing and communications.

Here’s a link to a video of the proceedings. (Martin’s panel starts around 10:00 a.m.)

100% Human

Chairman Smith, Deputy Chair Dasko, and Honourable Senators,

My name is Martin Waxman, and I want to certify that my remarks today are 100% human, and no AI was used to prepare them. That may sound like a funny way to begin, but I believe this type of disclosure is one way to maintain trust, as we establish guidelines around acceptable AI use cases for content and creativity.

I am an adjunct professor at the York University Schulich School of Business, and associate director of the School’s Future of Marketing Institute, a global think tank that examines and analyses tech trends and their implications for marketing and communications.

I write an AI and Digital Marketing Trends newsletter that has over 500,000 subscribers and create courses on generative AI for LinkedIn Learning.

I also train students and marketing and communications professionals to use AI beyond simple content generation, and to develop detailed and structured prompts that combine natural-language and systems thinking.

In addition, I’ve published two books of fiction and am a former film and television writer. My background is in the arts, not coding.

So, I appear before you today highly conflicted about the role AI will play in content production and distribution and its effect on writers and creators.

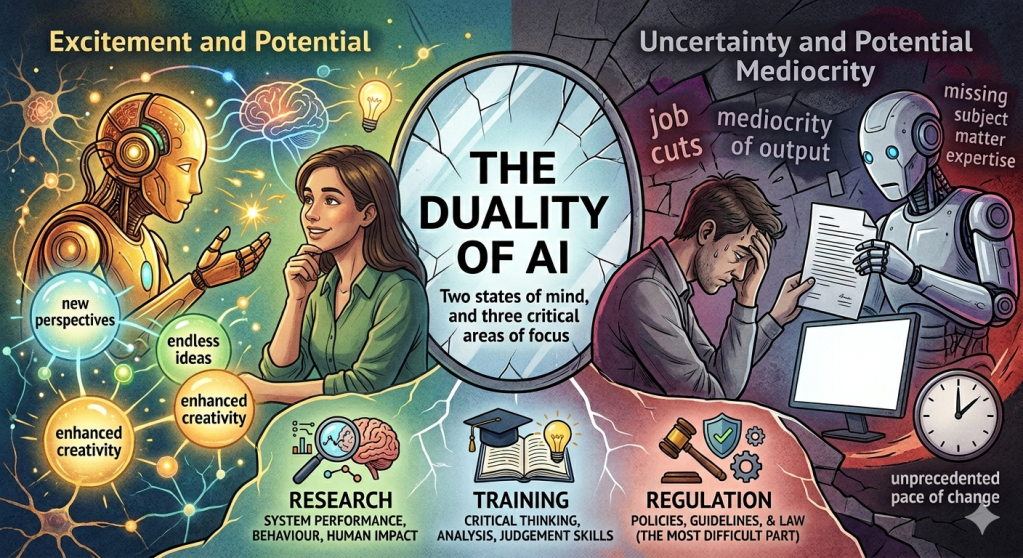

The Duality of AI

On one hand, I am very excited. On the other, I’m unsure how to deal with the unprecedented pace of change and uncertainty we’re all living through every day.

I can see incredible potential for AI to help provide writers with an endless array of new ideas and perspectives they would not have otherwise seen.

I also know that in the creative industries, like marketing and PR, professionals are under a great deal of pressure to produce larger and larger quantities of content. And that generative AI has the potential to take a good part of the load off their shoulders.

Yet, when I watch how people use AI – including my students – I am often disheartened by how much thinking they outsource to a machine. And that, along with the many dire predictions around job cuts, frightens me.

You see, rather than having a challenging conversation with a large language model, many people use AI as a shortcut to avoid the difficult task of thinking. They make a generic request, have a brief exchange and get an output that looks good enough, but lacks the quality and spark of something unique. And they’re unable to see beyond the mediocrity of their output because they’re missing subject matter expertise.

They’re not sparring with AI, engaging in provocative discussions, asking it to challenge their assumptions and ideas. They accept what it gives them and move on, choosing the easy way out.

I’m concerned many people, and especially new professionals, will miss out on the valuable experience they get by anguishing over a blank screen, taking the time to reflect on an idea, letting it percolate and revising it into a polished piece of work.

- But if we decide to offload our thinking, ideas and the creative labour involved in content production to a machine, how will young people ever develop their craft, imagination and determination?

- What role will they have in the workplace?

- I also wonder who owns the output of a piece of content that’s AI generated? How much of a contribution does a person have to make to claim authorship?

- What laws and regulations are needed to pave the way to a promising future for everyone?

These are some of the difficult questions we must discuss and debate right now.

Three Areas of Focus

And while I don’t have the answers, I believe we should start by focusing on three areas: research, training and regulation.

Research into how these systems perform. Research into their emergent behaviour, when they go off the rails, scheme and present hallucinations as facts. Research into how people are using AI and whether their minds are being stimulated or atrophied by assigning too many cognitive tasks to machines.

We need to develop and implement a formalized AI training curriculum that starts in primary school and continues throughout a person’s education. And we must put an emphasis on teaching students how to think, analyse and make judgements for themselves.

We also need to design programs to retrain the people who may be displaced by AI and help them find other purposeful jobs.

And we need to develop policies, guidelines and regulations that are codified into law. That might be the most difficult part. It was challenging enough to put rules around the internet and social media. Finding a way to regulate AI is even harder because the platforms and capabilities are evolving so rapidly.

I’m an optimist by nature. I do believe AI has the potential to make us smarter and more creative, and to enhance the quality of the content humans produce. But it’s incumbent on us to use it intelligently and guide its outputs rather than letting its outputs guide us.

I am happy to answer any questions you may have or to assist the committee and your study in any way today or in the future.

Thank you.

I asked https://thaura.ai/ to summarize the concerns about AI, including the environmental impact of data centers (electricity, water) and surveillance. Here’s its reply:

Based on the search results, here are the key concerns about AI’s environmental and surveillance impacts:

Environmental Impact

Electricity Consumption:

Water Usage:

Broader Environmental Impacts:

Surveillance Concerns

Government and Corporate Surveillance:

Democratic Risks:

Community Impacts:

These concerns highlight the complex trade-offs in AI development, where technological advancement comes at significant environmental and social costs that are not always fully accounted for in the rush to deploy AI systems.

I followed up with a prompt about gender, race, social class, and colonial bias. Here’s its reply:

AI systems reflect and amplify existing societal biases across gender, race, social class, and colonial dimensions:

Gender Bias

Systemic Exclusion:

Content Generation Bias:

Racial Bias

Facial Recognition Failures:

Hiring and Employment:

Social Class Bias

Economic Impact:

Access and Control:

Colonial Bias

Historical Power Imbalances:

Cultural Bias:

Root Causes and Solutions

Intersectional Challenges:

Proposed Solutions:

The evidence shows that AI is not neutral; it mirrors and amplifies existing societal inequalities. Addressing these biases requires fundamental changes in who designs AI systems, what data they’re trained on, and how they’re deployed in society.